human-in-the-loop

Human in the Loop examines where and how human judgment is intentionally preserved inside automated and AI-driven systems. The concept is often discussed loosely, but rarely defined with precision.

A true human-in-the-loop system includes a clear point of intervention where a person can review, override, or redirect system behavior before execution. Without that authority, the human role becomes observational rather than structural.
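As a minimal sketch of what a structural intervention point can look like in code, the Python below places a reviewer between a system's proposed action and its execution. Every name here (`Proposal`, `Review`, `Verdict`, `run_with_checkpoint`) is hypothetical and chosen for illustration; it is one possible shape under those assumptions, not a reference design.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Verdict(Enum):
    APPROVE = "approve"    # run the proposed action as-is
    OVERRIDE = "override"  # run a human-supplied action instead
    REJECT = "reject"      # run nothing


@dataclass
class Proposal:
    """What the automated system wants to do (hypothetical structure)."""
    action: str
    rationale: str


@dataclass
class Review:
    """The human decision on a proposal."""
    verdict: Verdict
    replacement: Optional[str] = None  # required only for OVERRIDE


def run_with_checkpoint(
    proposal: Proposal,
    reviewer: Callable[[Proposal], Review],
    execute: Callable[[str], str],
) -> Optional[str]:
    """Execute only what the reviewer has approved or substituted.

    The reviewer is called before execute, so nothing runs without
    a human verdict. Returns None when the proposal is rejected.
    """
    review = reviewer(proposal)
    if review.verdict is Verdict.REJECT:
        return None
    if review.verdict is Verdict.OVERRIDE:
        if review.replacement is None:
            raise ValueError("an override needs a replacement action")
        return execute(review.replacement)
    return execute(proposal.action)
```

The point of the sketch is the ordering: `execute` is reachable only through the reviewer's verdict. A design that logs the proposal and notifies a person after `execute` has already run would be observational in the sense described above, because the person has no authority over the outcome.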

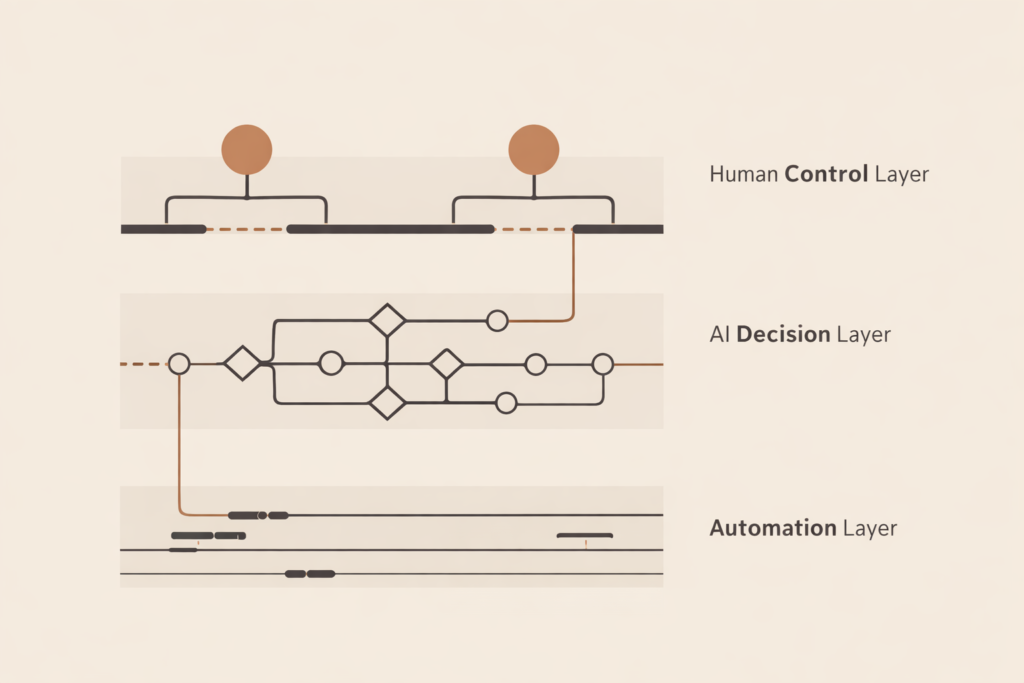

This tag explores how human oversight is implemented, where it fails, and how systems can be designed to maintain accountability. It focuses on decision checkpoints, escalation paths, and the limits of automation.

Topics include review systems, approval layers, override mechanisms, and the difference between supervision and control.

Human in the Loop is not about slowing systems down. It is about ensuring that responsibility remains anchored to a human decision when it matters most.

Education & Skills: AI hiring systems rank candidates before humans review them. If the system decides who rises, then hiring authority has already shifted. This breakdown shows where control actually sits and why structure matters.

Education & Skills: Human control systems keep automation and AI accountable. When execution, decision-making, and authority blur, responsibility disappears into the system.