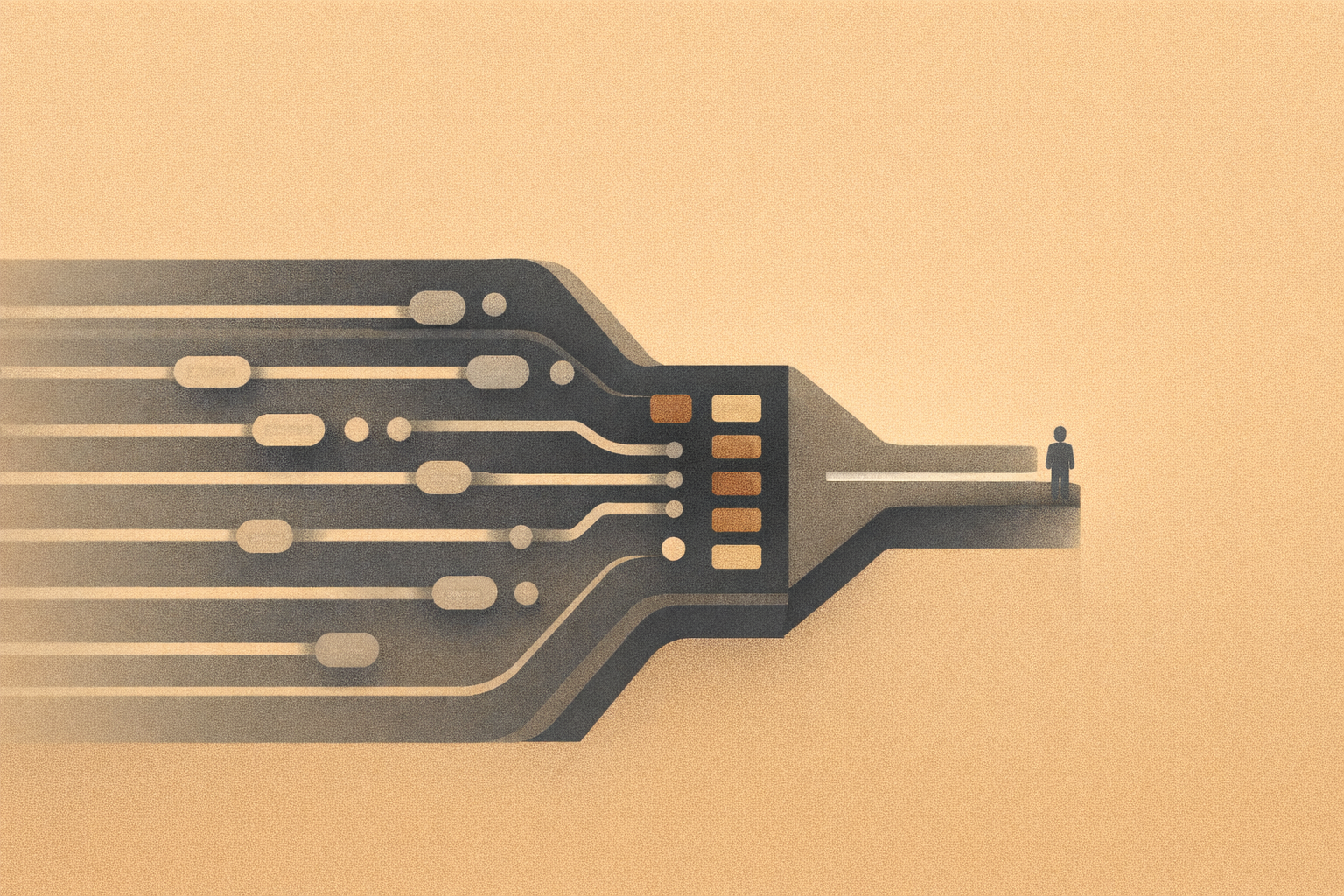

AI hiring systems are now the first gatekeeper in the hiring process. Before a human reads a resume, the system has already filtered, ranked, and prioritized candidates. As a result, the decision is often shaped before anyone reviews it.

This is not a future concern. It is how hiring is structured today.

AI Hiring Systems Start With Automation

Every hiring pipeline begins with automation. Applications are collected, formatted, and routed through systems designed to handle volume. In addition, scheduling, confirmations, and communication are automated to reduce workload.

This layer answers one question: what happens next. It moves candidates through the system without pause. However, automation does not evaluate quality. Instead, it processes inputs based on predefined rules.

This is where automation discipline matters. Without clear constraints, automation pushes flawed inputs forward at scale.
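A minimal sketch of what a constraint at this layer could look like. The `Application` fields, the two checks, and the route names are hypothetical and chosen only for illustration; a real pipeline would define its own rules.

```python
from dataclasses import dataclass


@dataclass
class Application:
    # Hypothetical fields; a real intake record would carry more.
    candidate_id: str
    resume_text: str
    email: str


def validate(app: Application) -> list[str]:
    """Return the constraint violations found; an empty list means the input is clean."""
    problems = []
    if not app.resume_text.strip():
        problems.append("empty resume text (possible parsing failure)")
    if "@" not in app.email:
        problems.append("malformed email")
    return problems


def route(app: Application) -> str:
    """Predefined rule: clean inputs move on, flawed inputs stop for a person to check."""
    return "needs_manual_check" if validate(app) else "forward_to_screening"
```

The design point is that the rule stops a flawed input instead of passing it along. Without the `validate` step, an application whose resume failed to parse would move forward looking like an empty, weak candidate.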

AI Hiring Systems Decide Who Rises

The second layer is where authority begins to shift. AI hiring systems analyze resumes, score candidates, and rank them against job requirements. For example, keywords, experience patterns, and inferred signals determine who moves forward.

This is not neutral. The system makes decisions about relevance, fit, and priority.

At this stage, the system answers a different question: what should happen. Therefore, it determines which candidates are visible and which are filtered out.

This is why AI decision systems must be examined closely. If the ranking model is flawed, the outcome is compromised before any human review begins.
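To make the mechanism concrete, here is a deliberately simplified sketch of keyword-based ranking with a cutoff. It is an assumption for illustration, not how any particular vendor's system works; the function names, sample resumes, and keywords are all invented.

```python
def keyword_score(resume_text: str, keywords: list[str]) -> int:
    """Count how many of the required keywords appear verbatim in the resume."""
    text = resume_text.lower()
    return sum(1 for kw in keywords if kw.lower() in text)


def shortlist(resumes: dict[str, str], keywords: list[str], top_n: int) -> list[str]:
    """Rank candidate IDs by score and keep the top_n; everyone else is hidden."""
    ranked = sorted(
        resumes,
        key=lambda cid: keyword_score(resumes[cid], keywords),
        reverse=True,
    )
    return ranked[:top_n]


# Invented sample data.
resumes = {
    "A": "Project management and stakeholder management in logistics",
    "B": "Led cross-team delivery programs and coordinated partners for 8 years",
    "C": "Project management certificate, entry level",
}
keywords = ["project management", "stakeholder"]

visible = shortlist(resumes, keywords, top_n=2)
```

In this sample, candidate B describes relevant experience in different words, scores zero, and never reaches the shortlist. Nothing errors out. The recruiter simply never sees B.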

Where Is the Human in the Loop?

Most hiring processes claim human oversight. However, that oversight often happens after the system narrows the field. Recruiters review a shortlist that AI has already shaped.

This creates a structural problem. The human is present, but the human is not deciding. Instead, the system has already determined who is worth attention.

A true human-in-the-loop system allows meaningful override. It enables a recruiter to surface overlooked candidates and question the ranking logic. Without that authority, the human role becomes observational.
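One way to picture meaningful override is a review queue that lets the recruiter see who fell below the cutoff and pull a candidate back in, with the reason recorded. This is a sketch under assumptions: `ReviewQueue`, its fields, and its methods are hypothetical names, not part of any real applicant tracking system.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewQueue:
    ranked: list[str]   # all candidate IDs, in the model's order
    cutoff: int         # how many the model places on the shortlist
    # Each override is (candidate_id, recruiter, reason), kept as an audit trail.
    overrides: list[tuple[str, str, str]] = field(default_factory=list)

    def below_cutoff(self) -> list[str]:
        """Candidates the model filtered out, kept visible to the recruiter."""
        return self.ranked[self.cutoff:]

    def surface(self, candidate_id: str, recruiter: str, reason: str) -> None:
        """Record a recruiter's decision to bring a candidate back into review."""
        if candidate_id not in self.ranked:
            raise ValueError(f"unknown candidate: {candidate_id}")
        if not reason.strip():
            raise ValueError("an override needs a stated reason")
        self.overrides.append((candidate_id, recruiter, reason))

    def shortlist(self) -> list[str]:
        """Model shortlist plus every candidate a recruiter has surfaced."""
        result = list(self.ranked[: self.cutoff])
        for cid, _, _ in self.overrides:
            if cid not in result:
                result.append(cid)
        return result


queue = ReviewQueue(ranked=["A", "C", "B"], cutoff=2)
queue.surface("B", "recruiter_1", "relevant experience described in different terms")
```

Two choices carry the argument here. The filtered-out candidates stay visible through `below_cutoff`, and every override is logged with a name and a reason, so the human decision is both possible and accountable.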

Where AI Hiring Systems Break

AI hiring systems fail quietly. They do not produce obvious errors. Instead, they produce patterns that go unchallenged.

- Qualified candidates are filtered out before review

- Ranking logic reflects incomplete or biased signals

- Human reviewers inherit the system’s assumptions

Each step appears functional. However, together they create a narrow pipeline that limits opportunity without clear accountability.

The Structure of Hiring Control

Disciplined hiring systems separate execution, decision, and control.

- Automation handles process flow

- AI supports evaluation without owning the final decision

- Humans retain authority to override and question results

If that structure is absent, the system is doing the hiring, not the organization.

This is why The Structure of Control matters. It defines where responsibility remains visible and enforceable.

AI hiring systems should increase efficiency. However, they should not replace judgment. Control must remain human, or accountability disappears.

Part of the Tech as Discipline series