Recommendation systems shape what you see before you decide what to look for. By the time you scroll, search, or click, the system has already filtered and arranged your options.

This is not random. It is structured attention.

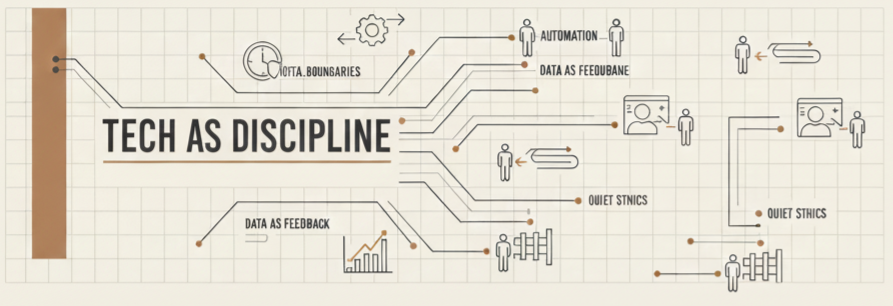

Recommendation Systems Start With Automation

Every platform begins with automation. Content is collected, categorized, and distributed across the system. User activity is tracked continuously. Clicks, watch time, pauses, and interactions are recorded and processed.

This layer answers one question: what is happening. It captures behavior and feeds it forward. However, automation does not decide meaning. It only organizes signals.

This is why automation discipline matters. Without clear structure, raw behavior becomes distorted input.
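A minimal sketch of what that discipline can look like. It assumes a hypothetical `Event` record and a hypothetical set of allowed event types; no real platform's schema is implied. Malformed events are dropped before they reach anything that interprets them.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical event types, chosen for illustration only.
ALLOWED_EVENTS = {"click", "watch", "pause"}

@dataclass(frozen=True)
class Event:
    user_id: str
    item_id: str
    kind: str
    seconds: float = 0.0

def validate(event: Event) -> bool:
    """Accept only known event types with a non-negative duration."""
    return event.kind in ALLOWED_EVENTS and event.seconds >= 0

def aggregate_watch_time(events):
    """Sum watch time per (user, item), skipping invalid events."""
    totals = defaultdict(float)
    for e in events:
        if validate(e) and e.kind == "watch":
            totals[(e.user_id, e.item_id)] += e.seconds
    return dict(totals)

events = [
    Event("u1", "a", "watch", 30.0),
    Event("u1", "a", "watch", 12.5),
    Event("u1", "b", "watch", -5.0),  # malformed: negative duration
    Event("u1", "b", "hover", 1.0),   # unknown event type
]
totals = aggregate_watch_time(events)
print(totals)  # {('u1', 'a'): 42.5}
```

The layer records and organizes. It does not rank anything.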

Recommendation Systems Decide What You See

The second layer introduces decision logic. Recommendation systems analyze behavior and predict what will keep you engaged. They rank content, prioritize visibility, and shape the order of what appears.

This is not passive sorting. The system is actively selecting your inputs.

At this stage, the system answers a different question: what should be seen. It determines which content surfaces and which remains hidden.

This is why AI decision systems require scrutiny. A model that optimizes only for engagement tends to narrow the range of what you see while favoring content that provokes stronger reactions.
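The effect is easy to see in a toy example. The scores and topic labels below are invented for illustration; in practice a model would produce the predictions. Ranking on predicted engagement alone fills every visible slot with one topic.

```python
# Hypothetical predicted engagement per item (made-up numbers).
predicted_engagement = {
    "drama_1": 0.92,
    "drama_2": 0.90,
    "drama_3": 0.88,
    "tutorial_1": 0.55,
    "essay_1": 0.40,
}

# Hypothetical topic label for each item.
topic_of = {
    "drama_1": "drama",
    "drama_2": "drama",
    "drama_3": "drama",
    "tutorial_1": "tutorial",
    "essay_1": "essay",
}

def rank_by_engagement(scores, k):
    """Return the k items with the highest predicted engagement."""
    return sorted(scores, key=scores.get, reverse=True)[:k]

top = rank_by_engagement(predicted_engagement, k=3)
visible_topics = {topic_of[item] for item in top}

print(top)             # ['drama_1', 'drama_2', 'drama_3']
print(visible_topics)  # {'drama'}
```

Nothing was removed from the catalog. Two topics simply never reached the screen.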

Where the Human Disappears

Most users believe they are choosing what they consume. In reality, the system has already framed the options. The feed feels open, but it is structured.

The human interacts with what is presented, not with the full field of possibilities. That difference matters.

A true human-in-the-loop structure would allow users to meaningfully adjust or override the system. Instead, most platforms offer limited control while maintaining algorithmic authority.

Where Recommendation Systems Break

Recommendation systems fail through repetition, not collapse.

- Content loops reinforce the same signals

- Exposure narrows over time

- Behavior becomes shaped by prior outputs

Each step appears relevant. However, relevance can become restriction when variation disappears.

The system does not need to remove choice. It only needs to reduce visibility.
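The loop described above can be simulated in a few lines. This is a sketch under strong assumptions: a hypothetical seven-item catalog, a user who always clicks the top item, and a fixed score boost for every item in the clicked topic. Real systems are far more complex, but the narrowing pattern is the same.

```python
# Hypothetical catalog: item -> topic.
TOPIC = {
    "music_1": "music", "music_2": "music", "music_3": "music",
    "sports_1": "sports", "sports_2": "sports",
    "science_1": "science", "cooking_1": "cooking",
}

# Hypothetical starting scores (integers keep the arithmetic exact).
START_SCORE = {
    "music_1": 10, "sports_1": 9, "science_1": 8, "music_2": 7,
    "sports_2": 6, "cooking_1": 5, "music_3": 4,
}

def simulate(rounds, k=3, boost=3):
    """Return the number of distinct topics shown in each round."""
    score = dict(START_SCORE)
    topics_per_round = []
    for _ in range(rounds):
        # The feed is the k highest-scoring items.
        feed = sorted(score, key=score.get, reverse=True)[:k]
        topics_per_round.append(len({TOPIC[item] for item in feed}))
        # Assumed user behavior: always click the first item shown.
        clicked_topic = TOPIC[feed[0]]
        # Prior output shapes the next input: boost the clicked topic.
        for item in score:
            if TOPIC[item] == clicked_topic:
                score[item] += boost
    return topics_per_round

narrowing = simulate(rounds=4)
print(narrowing)  # [3, 2, 1, 1]
```

Every round shows content the user clicked on before. Each feed looks relevant, and by the third round only one topic is visible.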

The Structure of Attention Control

Disciplined systems separate data collection, decision logic, and user control.

- Automation captures behavior accurately

- AI ranks content without locking perspective

- Humans retain the ability to reset or expand inputs

If that structure is missing, the system shapes attention without resistance.
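One way to picture that separation, as a sketch rather than a prescription. The names `Profile`, `UserControls`, and `build_feed` are hypothetical. The profile holds captured behavior, the ranking function holds the decision logic, and the user holds two levers: reserved exploration slots and a reset.

```python
from dataclasses import dataclass, field

@dataclass
class Profile:
    """Captured behavior: how often the user engaged with each topic."""
    topic_weight: dict = field(default_factory=dict)

    def record(self, topic):
        self.topic_weight[topic] = self.topic_weight.get(topic, 0) + 1

    def reset(self):
        """User-triggered: forget everything learned so far."""
        self.topic_weight.clear()

@dataclass
class UserControls:
    explore_slots: int = 1  # feed positions reserved for unfamiliar topics

def build_feed(catalog, profile, controls, k):
    """Rank by learned weight, but honor the user's exploration slots."""
    ranked = sorted(catalog, key=lambda it: profile.topic_weight.get(it[1], 0),
                    reverse=True)
    familiar = [it for it in ranked if it[1] in profile.topic_weight]
    unfamiliar = [it for it in ranked if it[1] not in profile.topic_weight]
    n_explore = min(controls.explore_slots, len(unfamiliar), k)
    feed = familiar[: k - n_explore] + unfamiliar[:n_explore]
    # Fill any remaining positions in ranked order.
    leftovers = [it for it in ranked if it not in feed]
    feed += leftovers[: k - len(feed)]
    return [item for item, _ in feed]

# Hypothetical catalog of (item, topic) pairs in default order.
catalog = [("m1", "music"), ("s1", "science"), ("m2", "music"),
           ("c1", "cooking"), ("m3", "music")]

profile = Profile()
for _ in range(3):
    profile.record("music")

locked = build_feed(catalog, profile, UserControls(explore_slots=0), k=3)
expanded = build_feed(catalog, profile, UserControls(explore_slots=1), k=3)
profile.reset()
after_reset = build_feed(catalog, profile, UserControls(explore_slots=1), k=3)

print(locked)       # ['m1', 'm2', 'm3']
print(expanded)     # ['m1', 'm2', 's1']
print(after_reset)  # ['m1', 's1', 'm2']
```

With no exploration slots, the feed is locked to one topic. One reserved slot reopens the field, and a reset returns the feed to the catalog's default order.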

This is why The Structure of Control matters. It defines how authority operates across systems.

Recommendation systems should guide discovery. They should not define perception. When control disappears, attention becomes directed rather than chosen.

→ The Cost of Convenience

→ When Systems Make Decisions for You

→ Designing Friction on Purpose

→ Keeping Humans in the Loop

→ The Structure of Control

Tech as Discipline focuses on control, not convenience. If you do not understand how inputs are selected, you cannot claim full ownership of your attention.