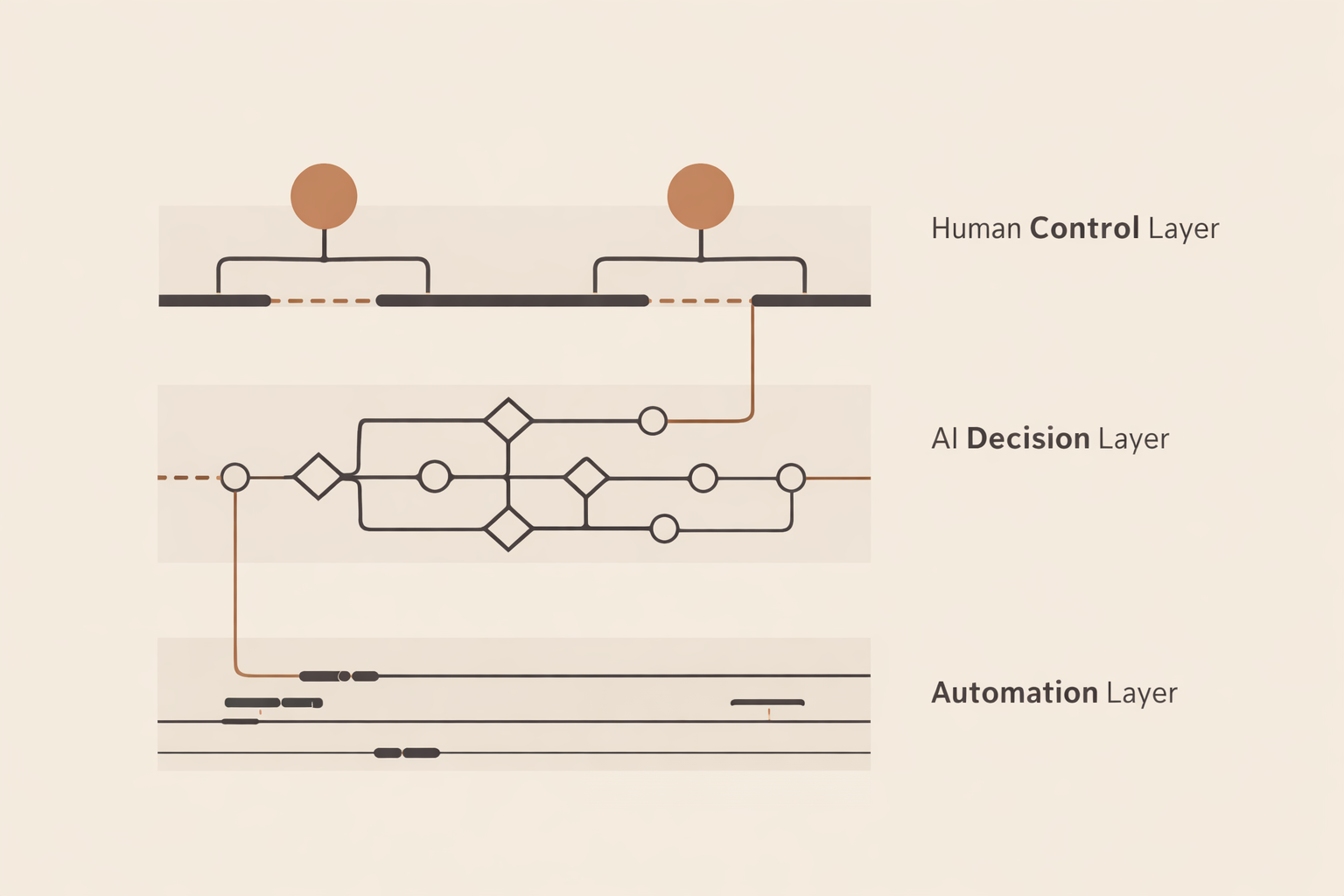

Human control systems determine whether automation serves people or quietly replaces judgment. Automation runs tasks. AI shapes decisions. Humans are supposed to control outcomes. When those layers blur, accountability disappears.

This is not a technology problem. It is a structure problem.

Human Control Systems Require Layered Boundaries

Most systems fail in one of three places: execution, decision-making, or control. Automation determines what runs. AI determines what is prioritized. Humans are supposed to determine what is allowed.

When those functions are not clearly separated, responsibility starts to dissolve. Small errors stop looking like decisions and start looking like accidents. That is how drift becomes normal.

Automation Runs the System

Automation handles execution. It moves tasks forward without pause. It increases speed, reduces effort, and removes repetition.

However, automation does not understand context. It follows rules. When those rules are wrong, automation scales the error. As a result, a local mistake can become a system-wide problem very quickly.

Automation answers one question: What happens next?

This is why Automation Discipline exists. It keeps execution visible, constrained, and intentional.
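
One way to picture "visible, constrained, and intentional" is a runner that logs every step and stops itself once a failure budget is used up. The sketch below is illustrative only: `run_constrained`, `RunReport`, and the `max_failures` parameter are hypothetical names invented for this example, not part of any real library or of the Automation Discipline material itself.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, List


@dataclass
class RunReport:
    """Visible record of what the automation did (hypothetical structure)."""
    processed: int = 0
    failed: int = 0
    halted: bool = False
    log: List[str] = field(default_factory=list)


def run_constrained(tasks: Iterable[str],
                    action: Callable[[str], None],
                    max_failures: int = 3) -> RunReport:
    """Run `action` on each task, but stop once failures reach `max_failures`.

    The failure budget is the constraint: a wrong rule cannot keep
    scaling its error across every remaining task.
    """
    report = RunReport()
    for task in tasks:
        try:
            action(task)
            report.processed += 1
            report.log.append(f"ok: {task}")
        except Exception as exc:
            report.failed += 1
            report.log.append(f"failed: {task} ({exc})")
            if report.failed >= max_failures:
                # Stop instead of repeating the same mistake on later tasks.
                report.halted = True
                report.log.append("halted: failure budget exhausted")
                break
    return report
```

With a rule that is wrong for every task, this runner stops after three failures rather than working through the whole queue, and the log shows exactly where it stopped.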

AI Decides What Matters

AI introduces a different layer. It does not just execute. It prioritizes, recommends, ranks, and influences outcomes.

Over time, that shifts how decisions are made. The system begins to shape judgment rather than simply support it.

AI answers a different question: What should happen?

This is why AI Decision Systems matters. It examines what happens when systems begin to influence judgment without owning the consequences.

Humans Must Retain Authority

The final layer is control. This is where many systems quietly break.

Humans are often present. However, presence is not authority. Watching a system is not the same as controlling it.

A real control point includes:

- Clear intervention: a defined moment where action can be taken

- Real authority: the ability to override or redirect

- Accountability: ownership of the outcome

This is the function of Human in the Loop. It defines where human judgment is structural rather than symbolic.
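
The three properties of a control point can be sketched as a small data structure. This is a minimal illustration under stated assumptions: `ControlPoint`, `Decision`, and `Outcome` are hypothetical names made up for this example, and a real system would need authentication, audit storage, and timeouts that are omitted here.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    APPROVE = "approve"
    OVERRIDE = "override"


@dataclass
class Outcome:
    action: str         # what was actually carried out
    decided_by: str     # accountability: the human who owns the outcome
    decision: Decision  # whether the recommendation was accepted or replaced


class ControlPoint:
    """A defined moment where a named human reviews a recommendation."""

    def __init__(self, owner: str):
        self.owner = owner

    def review(self, recommended: str, decision: Decision,
               replacement: Optional[str] = None) -> Outcome:
        # Real authority: an override replaces the recommended action.
        if decision is Decision.OVERRIDE:
            if replacement is None:
                raise ValueError("an override must name a replacement action")
            action = replacement
        else:
            action = recommended
        # Every outcome carries the owner's name, approved or overridden.
        return Outcome(action=action, decided_by=self.owner, decision=decision)
```

Calling `review` is the intervention point, the `OVERRIDE` branch is the authority, and the `decided_by` field is the accountability. A dashboard that only displays `recommended` would have none of the three.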

Where Human Control Systems Break

Most failures are structural, not dramatic.

- Automation runs without oversight

- AI prioritizes without accountability

- Humans observe without authority

Each layer may appear functional on its own. Together, they can still produce drift. In practice, that drift hides failure until the damage has already compounded.

According to the NIST AI Risk Management Framework, trustworthy systems require governance, accountability, and ongoing human oversight. That is not a branding preference. It is a structural necessity.

The Discipline of Structure

Disciplined systems do not reject automation or AI. Instead, they define limits.

- Automation is constrained

- AI is questioned

- Humans remain accountable

This is what Tech as Discipline ultimately enforces.

Technology should increase capability without removing responsibility. Strong human control systems make that possible.